Benefits

- Zero-Copy architecture: Read tables managed by Databricks Unity Catalog without moving or duplicating data.

- Maintained governance: Databricks Workspace Admins can retain catalog- and table-level access control when reading their Databricks catalogs.

- Secure federation: Connect securely using Databricks authentication credentials.

- Accelerated innovation on the lakehouse: Take spatial ideas to market faster by running Wherobots’ 300+ spatial functions, raster inference, and compute for physical-world data directly on your Unity Catalog data.

Supported workflows

Wherobots’ integration with Databricks Unity Catalog supports the following workflows:

| Read Source (Unity Catalog) | Write Destination | Required Databricks Authentication | Documentation |
|---|---|---|---|
| Managed Delta Table | Wherobots-Managed Catalog | Personal Access Token (PAT) (assigned to an individual or Service Principal) | Workflow Configuration |
| Managed Delta Table | External Delta Table (in Unity Catalog) | Personal Access Token (PAT) (assigned to an individual or Service Principal) | Workflow Configuration |
| Managed Iceberg Table | Wherobots-Managed Catalog | Service Principal with OAuth | Workflow Configuration |
| Managed Iceberg Table | Managed Iceberg Table (in Unity Catalog) | Service Principal with OAuth | Workflow Configuration |
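The table above can be read as a lookup from (read source, write destination) to the required credential. A minimal Python sketch of that lookup follows; the `required_auth` helper and its string keys are hypothetical names used for illustration and are not part of any Wherobots or Databricks API.

```python
# Hypothetical lookup that mirrors the supported-workflows table.
# Keys are (read source, write destination); values are the required
# Databricks authentication method.
SUPPORTED_WORKFLOWS = {
    ("managed_delta", "wherobots_catalog"): "pat",
    ("managed_delta", "external_delta_uc"): "pat",
    ("managed_iceberg", "wherobots_catalog"): "oauth_service_principal",
    ("managed_iceberg", "managed_iceberg_uc"): "oauth_service_principal",
}


def required_auth(source: str, destination: str) -> str:
    """Return the auth method for a workflow, or raise if it is unsupported."""
    try:
        return SUPPORTED_WORKFLOWS[(source, destination)]
    except KeyError:
        raise ValueError(
            f"Unsupported workflow: {source} -> {destination}"
        ) from None


print(required_auth("managed_delta", "external_delta_uc"))  # prints "pat"
```

Any combination that is not in the table, such as reading Delta and writing a Managed Iceberg Table, raises a `ValueError` in this sketch.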

Setup and configuration

Before you start

Before you can use this feature, make sure you have the following:

- A Wherobots Account within a Professional, Innovation, or Enterprise Edition Organization. Your Account needs to be assigned an Admin role to create a Connection.

- A pre-existing Managed Delta or Managed Iceberg table within the Databricks platform.

- A pre-existing Unity Catalog in Databricks.

- The necessary permissions in Databricks, as described below.

Creating the Connection

Databricks permissions

The permissions you need depend on your read/write workflow:

- Writing to Wherobots

- Writing to Databricks Unity Catalog

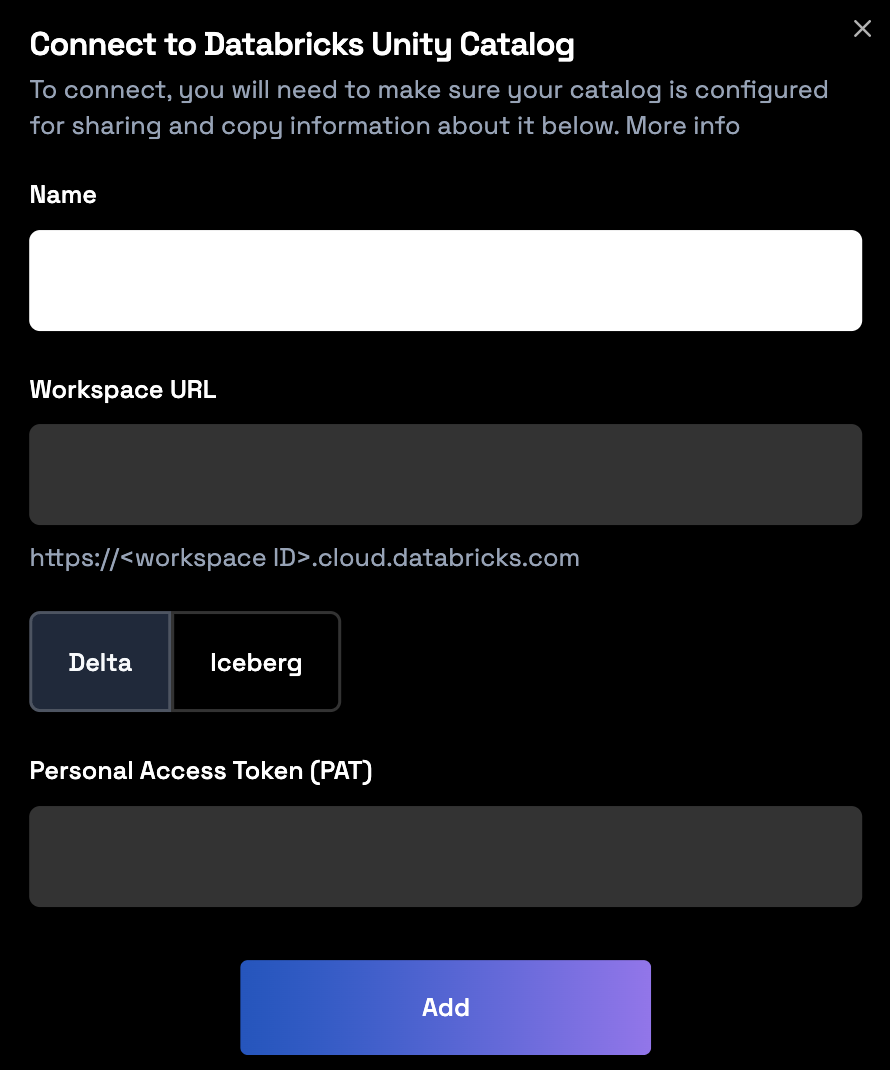

If you’re reading from a Managed Delta Table and writing to a Wherobots-managed Catalog:

- Create a Personal Access Token (PAT).

- The following permissions are required:

| Permission | Granted On (Object Type) | Target / Scope |
|---|---|---|
| USE CATALOG | Catalog | The catalog containing the source Delta table |
| USE SCHEMA | Schema | The schema containing the source Delta table |
| SELECT | Table | The source Delta table being read |
| CAN USE | Service principal or individual | |

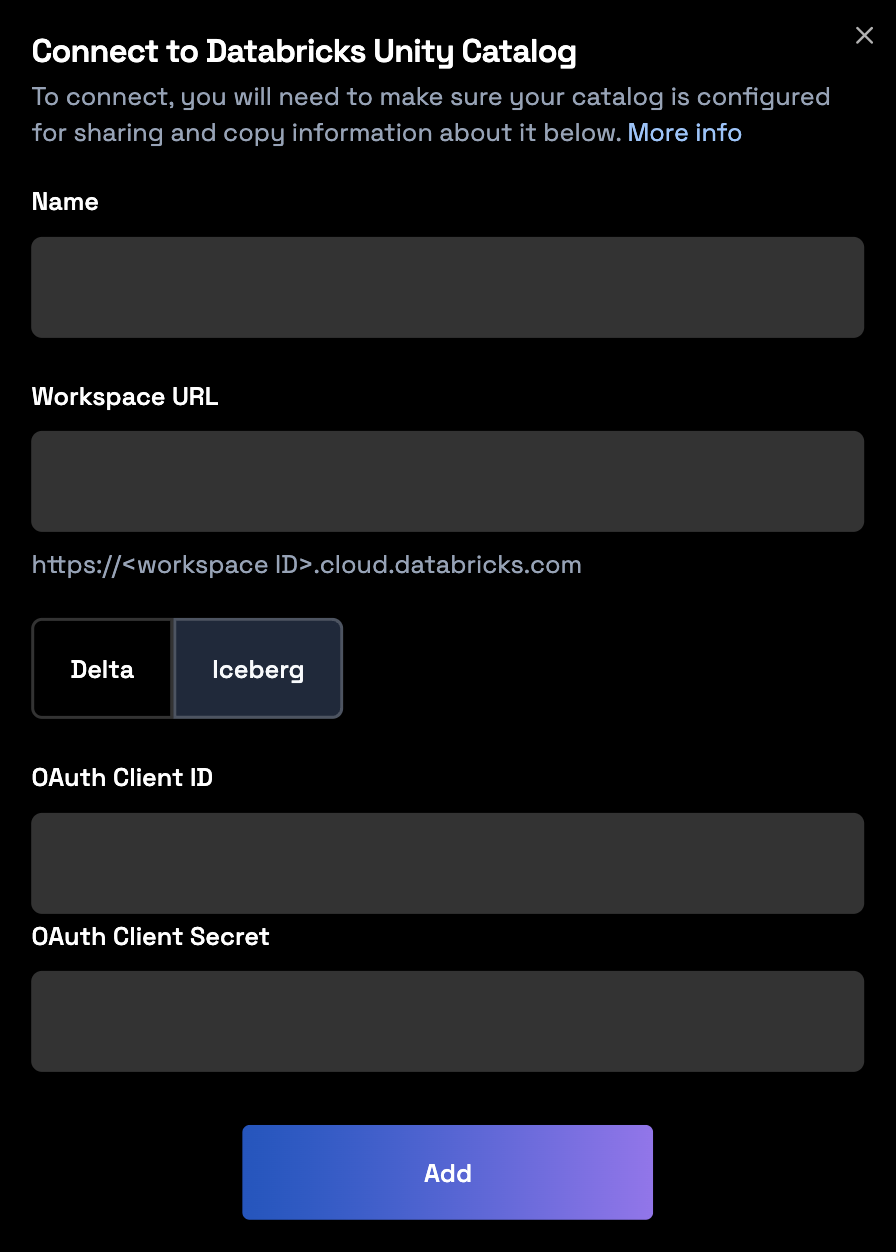

If you’re reading from a Managed Iceberg Table and writing to a Wherobots-managed Catalog:

- Use a Service Principal with OAuth. For more information, see Authorize service principal access to Databricks with OAuth in the Official Databricks Documentation.

- Record the `<uc-catalog-name>`, `<workspace-url>`, `<oauth_client_id>`, and `<oauth_client_secret>` for the Wherobots UI.

- The following permissions are required:

| Permission | Granted On (Object Type) | Target / Scope |
|---|---|---|
| USE CATALOG | Catalog | The catalog containing the source Iceberg table |
| USE SCHEMA | Schema | The schema containing the source Iceberg table |
| SELECT | Table | The source Iceberg table being read |
| EXTERNAL USE SCHEMA | Schema | The schema containing the source Iceberg table |
| READ VOLUME | External Volume | The volume where the source table’s files are stored |

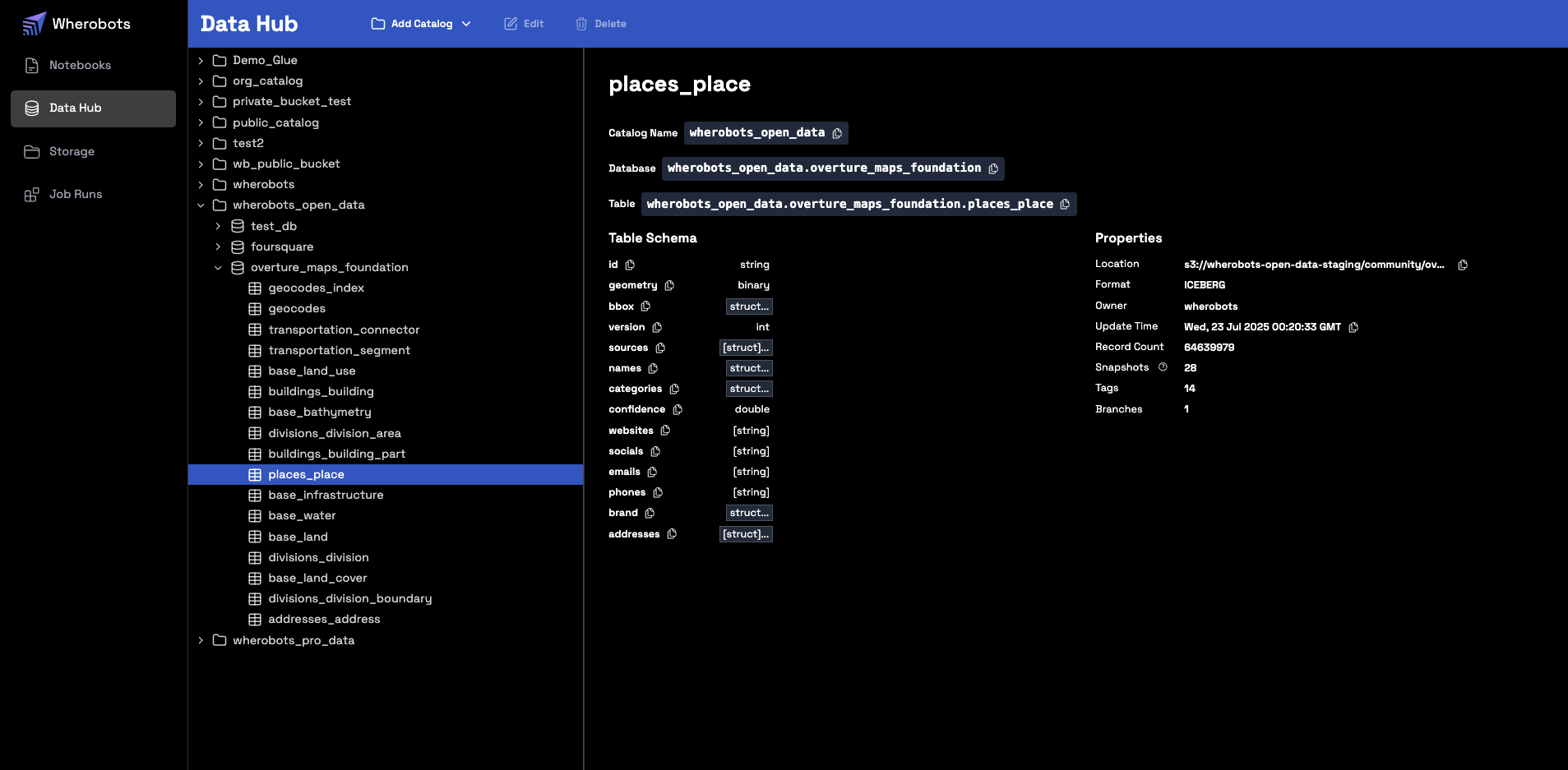
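The Unity Catalog privileges listed above correspond to `GRANT` statements that a Databricks admin could run. The sketch below only renders the SQL text in Python and executes nothing. The principal, catalog, schema, table, and volume names are placeholders, and the `CAN USE` entry from the Delta workflow is left out because the source does not state which object it applies to. Treat this as an illustration rather than the documented setup procedure.

```python
def render_grants(principal, catalog, schema, table, iceberg=False, volume=None):
    """Render GRANT statements for the read permissions listed above.

    All identifiers are caller-supplied placeholders; nothing is executed.
    """
    fq_schema = f"{catalog}.{schema}"
    grants = [
        f"GRANT USE CATALOG ON CATALOG {catalog} TO `{principal}`;",
        f"GRANT USE SCHEMA ON SCHEMA {fq_schema} TO `{principal}`;",
        f"GRANT SELECT ON TABLE {fq_schema}.{table} TO `{principal}`;",
    ]
    if iceberg:
        # Extra privileges listed for the Iceberg workflow.
        grants.append(
            f"GRANT EXTERNAL USE SCHEMA ON SCHEMA {fq_schema} TO `{principal}`;"
        )
        if volume is not None:
            grants.append(
                f"GRANT READ VOLUME ON VOLUME {fq_schema}.{volume} TO `{principal}`;"
            )
    return grants


# Example with placeholder names for an Iceberg source table.
for statement in render_grants(
    "my-service-principal", "main", "geo", "parcels",
    iceberg=True, volume="parcel_files",
):
    print(statement)
```

Keeping the Delta and Iceberg cases in one helper makes the difference visible: the Iceberg workflow adds `EXTERNAL USE SCHEMA` and `READ VOLUME` on top of the three privileges both workflows share.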

Add the catalog in Wherobots

- Navigate to the Data Hub in your Wherobots Organization.

- Click Add Catalog.

- Select either Delta or Iceberg, depending on the format of the source table you are connecting to.

- Enter the required information. The Name must exactly match the catalog name in your Databricks Workspace.

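- For Delta tables: Enter your Personal Access Token (PAT) and Workspace URL.

- For Iceberg tables: Enter the Workspace URL, OAuth client ID, and OAuth client secret that you recorded for the Service Principal.

- Click Add.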

Runtime Restart Required After Data Integration

To use new storage integrations or catalogs in your notebooks, you must start a new runtime. Notebooks can only access storage integrations or catalogs that were created before the runtime started.

Reading and writing Unity Catalog tables

You can access your Unity Catalog tables in a Wherobots Notebook, Job Run, or SQL Session. The following sections detail how to work with your Unity Catalog tables in a Wherobots Notebook.

Set the SedonaContext

In a Wherobots Notebook, create the `SedonaContext` and import any other libraries your analysis needs.

The setup code imports the necessary modules from the Sedona library, creates a `SedonaContext` object, and imports `expr`.
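A minimal sketch of that setup, based on the standard Apache Sedona entry points (`SedonaContext.builder()` and `SedonaContext.create()`). The imports are deferred into a function so that the snippet can be defined outside a Wherobots runtime; in a notebook you would normally run them at the top of a cell. The `create_sedona_context` name is illustrative, not part of the Wherobots API.

```python
def create_sedona_context():
    """Create a SedonaContext and return it together with `expr`.

    Requires the Sedona and PySpark packages, which a Wherobots Notebook
    runtime is assumed to provide.
    """
    from sedona.spark import SedonaContext
    from pyspark.sql.functions import expr

    config = SedonaContext.builder().getOrCreate()
    sedona = SedonaContext.create(config)
    return sedona, expr


# In a Wherobots Notebook:
# sedona, expr = create_sedona_context()
```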

Set your Databricks Resource Variables

Define the variables that point to your Databricks resources:

Reading from a Delta Table

- Writing to a Wherobots-managed catalog

- Writing to an External Delta Table in Unity Catalog
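As a rough sketch of the Delta workflow, the helpers below build the three-part table name from the resource variables, read the table through the session, and write the result to a Wherobots-managed catalog. They rely only on standard PySpark calls (`SparkSession.table` and `DataFrame.writeTo(...).createOrReplace()`). All catalog, schema, and table names are placeholders, and the write path for an External Delta Table is not shown because the source does not specify it.

```python
# Placeholder resource variables; replace them with your own names.
UC_CATALOG = "my_uc_catalog"  # must match the catalog name in Databricks
UC_SCHEMA = "my_schema"
UC_TABLE = "my_delta_table"
WHEROBOTS_TARGET = "my_wherobots_catalog.my_database.my_output_table"


def qualified_name(catalog: str, schema: str, table: str) -> str:
    """Build the catalog.schema.table name used to address a table."""
    return f"{catalog}.{schema}.{table}"


def read_uc_table(session, catalog: str, schema: str, table: str):
    """Read a Unity Catalog table as a DataFrame via SparkSession.table."""
    return session.table(qualified_name(catalog, schema, table))


def write_to_wherobots(df, target: str) -> None:
    """Create or replace the target table in a Wherobots-managed catalog."""
    df.writeTo(target).createOrReplace()


# In a notebook, with `sedona` being the SedonaContext session:
# df = read_uc_table(sedona, UC_CATALOG, UC_SCHEMA, UC_TABLE)
# write_to_wherobots(df, WHEROBOTS_TARGET)
```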

Reading from an Iceberg Table

- Writing to a Wherobots-managed Catalog

- Writing to a new Managed Iceberg Table in Unity Catalog
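For the Iceberg workflow, one possible shape for writing results back to Unity Catalog as a new table is sketched below. It assumes that geometry columns should be serialized to WKB with Sedona's `ST_AsBinary` before leaving Wherobots; that is an assumption drawn from the use-case descriptions, not a documented requirement. The write uses the standard PySpark `DataFrame.writeTo(...).using("iceberg").create()` chain. The function name, column name, and table names are hypothetical.

```python
def write_new_iceberg_table(df, target: str, geometry_col: str = "geometry") -> None:
    """Write `df` to a new Iceberg table, replacing the geometry column with WKB.

    Assumption: the destination expects binary WKB rather than a native
    geometry type. `create()` fails if the target table already exists.
    """
    wkb_col = f"{geometry_col}_wkb"
    # Add the serialized column, then drop the original geometry column.
    serialized = df.selectExpr(
        "*", f"ST_AsBinary({geometry_col}) AS {wkb_col}"
    ).drop(geometry_col)
    serialized.writeTo(target).using("iceberg").create()


# In a notebook, with `sedona` being the SedonaContext session:
# df = sedona.table("my_uc_catalog.my_schema.my_iceberg_table")
# write_new_iceberg_table(df, "my_uc_catalog.my_schema.my_new_table")
```

Using `create()` rather than `createOrReplace()` is a deliberately conservative choice here: it refuses to overwrite an existing Unity Catalog table.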

Usage and limitations

- Catalog Naming: You cannot use a local alias for a catalog. If your Wherobots Organization already has a catalog named `wherobots`, connecting a Databricks catalog that is also named `wherobots` causes a permanent naming conflict and must be avoided.

- Catalog Limit: The integration supports up to 10 foreign catalogs per Organization.

- UniForm: If you use Databricks’ Universal Format (UniForm) to enable Iceberg reads on a Delta table, that table will be read-only.
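The naming and limit rules above can be checked before you attempt to add a catalog. The following is a small, hypothetical pre-flight helper; it does not call any Wherobots API, and the caller is assumed to supply the existing catalog names and the current foreign catalog count.

```python
MAX_FOREIGN_CATALOGS = 10  # limit stated in the list above


def check_new_catalog(name, existing_catalogs, foreign_catalog_count):
    """Return a list of problems that would block connecting `name`."""
    problems = []
    if name in existing_catalogs:
        # No local alias is possible, so a clash cannot be worked around.
        problems.append(
            f"catalog name '{name}' already exists in the Organization"
        )
    if foreign_catalog_count >= MAX_FOREIGN_CATALOGS:
        problems.append(
            f"foreign catalog limit of {MAX_FOREIGN_CATALOGS} reached"
        )
    return problems


print(check_new_catalog("wherobots", {"wherobots"}, 3))
```

An empty list means neither rule is violated.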

Workflows explained

The following table provides a detailed summary of each workflow and its intended use case.

| Use Case | Read Source (Unity Catalog) | Write Destination |
|---|---|---|
| Preserve GEOMETRY columns for continued complex spatial analysis and visualization within the Wherobots environment. | Managed Delta Table | Wherobots-Managed Catalog |
| Generate spatial features for AI and BI in Databricks. Complete complex spatial analysis in Wherobots and write spatially-enriched feature columns back to Unity Catalog for use in Databricks’ ML models and BI dashboards. | Managed Delta Table | External Delta Table (in Unity Catalog) |
| Preserve GEOMETRY columns for continued complex spatial analysis and visualization within the Wherobots environment. | Managed Iceberg Table | Wherobots-Managed Catalog |
| Generate spatial features for AI and BI in Databricks. Complete complex spatial analysis in Wherobots and write spatially-enriched feature columns back to Unity Catalog for use in Databricks’ ML models and BI dashboards. | Managed Iceberg Table | Managed Iceberg Table (in Unity Catalog) |